Customer interviews should be one of the easiest ways to learn what your customers need. In practice, they often aren’t. Founders can talk to 50 customers and still build the wrong product.

Why is that?

I went through discussions in more than 20 product and startup communities and kept seeing the same patterns:

- Interviews are used for the wrong purposes

- Interviews skewed by confirmation bias

- Poor note-taking, no analysis pipeline, and expensive specialists

- Everything sounds important, but nothing feels urgent

When customer interviews fail, it is rarely because of "bad" customers. It is usually because of a missing real-time feedback loop and ordinary human bias. Yet many teams still don’t know how to address these issues.

The Real Reasons Customer Interviews Don’t Work

Customer interviews rarely fail because of one big mistake. They fail because of a set of small, repeatable patterns that quietly distort what teams hear. These patterns show up across startups, agencies, and even mature product teams. Once you learn to recognize them, most interview failures become predictable. Let’s define the most common mistakes so they are easier to prevent.

Interviews are used for the wrong purpose

One of the biggest mistakes is treating interviews like a prediction tool. Teams ask hypothetical questions (“Would you use this?”, “Would you pay for this?”), and customers give polite, optimistic answers that don’t match real behavior.

Interviews only work when they explore past behavior and actual pain, not predictions.

Fix:

- Focus on the past: Only ask about past behavior and actual pain points rather than future predictions.

- Use open-ended prompts: Let customers walk you through their current operations step by step.

- Prepare a framework: Arrive with a solid structure to ensure you ask the right questions every time.

Interviews skewed by subtle confirmation bias

Interviewers often influence answers without meaning to. A slightly enthusiastic tone or a question like “Do you find this useful?” pushes customers toward what they think you want to hear. People naturally want to be agreeable, so they adapt their responses.

Fix:

- Neutralize your language: Remove loaded words like “amazing” or “useful” from your script before starting.

- Record every interview: Review 10 minutes of your own audio to spot leading phrasing.

- Get a second opinion: Ask a teammate or an AI coach to flag leading questions in your script.
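If you want a quick, mechanical first pass before a teammate or AI coach reviews your script, a few lines of Python can flag the most obvious offenders. This is a minimal sketch, not a real tool: the word lists (`LOADED_WORDS`, `PREDICTION_OPENERS`, `YES_NO_OPENERS`) and the function names are hypothetical, and plain keyword matching will miss subtler leading phrasing that only a careful human reviewer catches.

```python
import re

# Hypothetical word lists: tune them to your own script and domain.
LOADED_WORDS = {"amazing", "useful", "awesome", "great", "love", "easy"}
# Openers that ask customers to predict the future instead of describing the past.
PREDICTION_OPENERS = ("would you", "will you", "if we built")
# Openers that invite a polite "yes" rather than a story.
YES_NO_OPENERS = ("do you find", "do you like", "don't you")


def flag_question(question: str) -> list[str]:
    """Return warnings for a single interview question (empty list if none)."""
    lowered = question.lower().strip()
    words = set(re.findall(r"[a-z']+", lowered))
    warnings = [f"loaded word: '{w}'" for w in sorted(words & LOADED_WORDS)]
    if lowered.startswith(PREDICTION_OPENERS):
        warnings.append("asks for a prediction, not past behavior")
    if lowered.startswith(YES_NO_OPENERS):
        warnings.append("invites a yes/no answer")
    return warnings


def lint_script(questions: list[str]) -> dict[str, list[str]]:
    """Map each flagged question to its warnings; clean questions are left out."""
    report = {}
    for question in questions:
        warnings = flag_question(question)
        if warnings:
            report[question] = warnings
    return report


if __name__ == "__main__":
    script = [
        "Would you use an amazing tool for this?",
        "Tell me about the last time you onboarded a new client.",
        "Do you find this dashboard useful?",
    ]
    for question, warnings in lint_script(script).items():
        print(question)
        for warning in warnings:
            print("  -", warning)
```

In this example, the open "Tell me about the last time..." question passes, while the other two are flagged. Treat the output as a prompt for rewording, not a verdict.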

Poor note-taking, no analysis pipeline, and expensive specialists

UX researchers in community threads agree that interview notes often “disappear,” and they call this the biggest reason interviews fail: vague notes, partial quotes, and messy impressions. Without a standard analysis workflow, insights never turn into patterns, and many companies can’t afford UX researchers to do that work for them.

Fix:

- Automate the pipeline: Record, transcribe, and summarize insights immediately after a block of interviews.

- Deploy AI agents: Use AI tools to handle the heavy lifting of manual transcription and initial synthesis.
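To make “turning notes into patterns” concrete, here is a minimal sketch of one step in a standard workflow: once each note has been tagged with a short pain-point code (by hand or by whatever AI tool you use), count how many separate interviews raised each code. The `Note` and `Pattern` types, the tag names, and the two-interview threshold are assumptions for illustration, not features of any particular product.

```python
from dataclasses import dataclass, field


@dataclass
class Note:
    interview_id: str
    tag: str    # short pain-point code, e.g. "manual-export" (hypothetical)
    quote: str  # verbatim customer quote that supports the tag


@dataclass
class Pattern:
    tag: str
    interviews: set[str] = field(default_factory=set)
    quotes: list[str] = field(default_factory=list)


def find_patterns(notes: list[Note], min_interviews: int = 2) -> list[Pattern]:
    """Group tagged notes; keep tags raised in at least `min_interviews` separate interviews."""
    by_tag: dict[str, Pattern] = {}
    for note in notes:
        if note.tag not in by_tag:
            by_tag[note.tag] = Pattern(note.tag)
        by_tag[note.tag].interviews.add(note.interview_id)
        by_tag[note.tag].quotes.append(note.quote)
    recurring = [p for p in by_tag.values() if len(p.interviews) >= min_interviews]
    # Most widespread first; ties broken alphabetically so the output is stable.
    return sorted(recurring, key=lambda p: (-len(p.interviews), p.tag))


if __name__ == "__main__":
    notes = [
        Note("i1", "manual-export", "I copy everything into a spreadsheet every Friday."),
        Note("i2", "manual-export", "Exporting takes me an hour a week."),
        Note("i2", "slow-approvals", "Approvals sit in someone's inbox for days."),
        Note("i3", "manual-export", "We still export by hand."),
        Note("i3", "slow-approvals", "Sign-off is always the bottleneck."),
        Note("i1", "pricing-confusion", "I never know which plan we're on."),
    ]
    for p in find_patterns(notes):
        print(f"{p.tag}: {len(p.interviews)} interviews")
    # manual-export: 3 interviews
    # slow-approvals: 2 interviews
```

Counting distinct interviews rather than raw mentions keeps one talkative customer from inflating a pattern, and keeping the quotes attached means every pattern can be traced back to what customers actually said.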

Everything is equally important

Some interviews feel great: fun, friendly, and full of storytelling. Yet they produce long lists of pain points and zero decision-driving insight. Without a structure, everything on the list feels equally pressing. Teams leave with lots of notes but no ranking, no urgency, and no sense of what actually drives decisions.

Fix:

- Force a ranking: Ask customers to rank their top three pains before the interview ends.

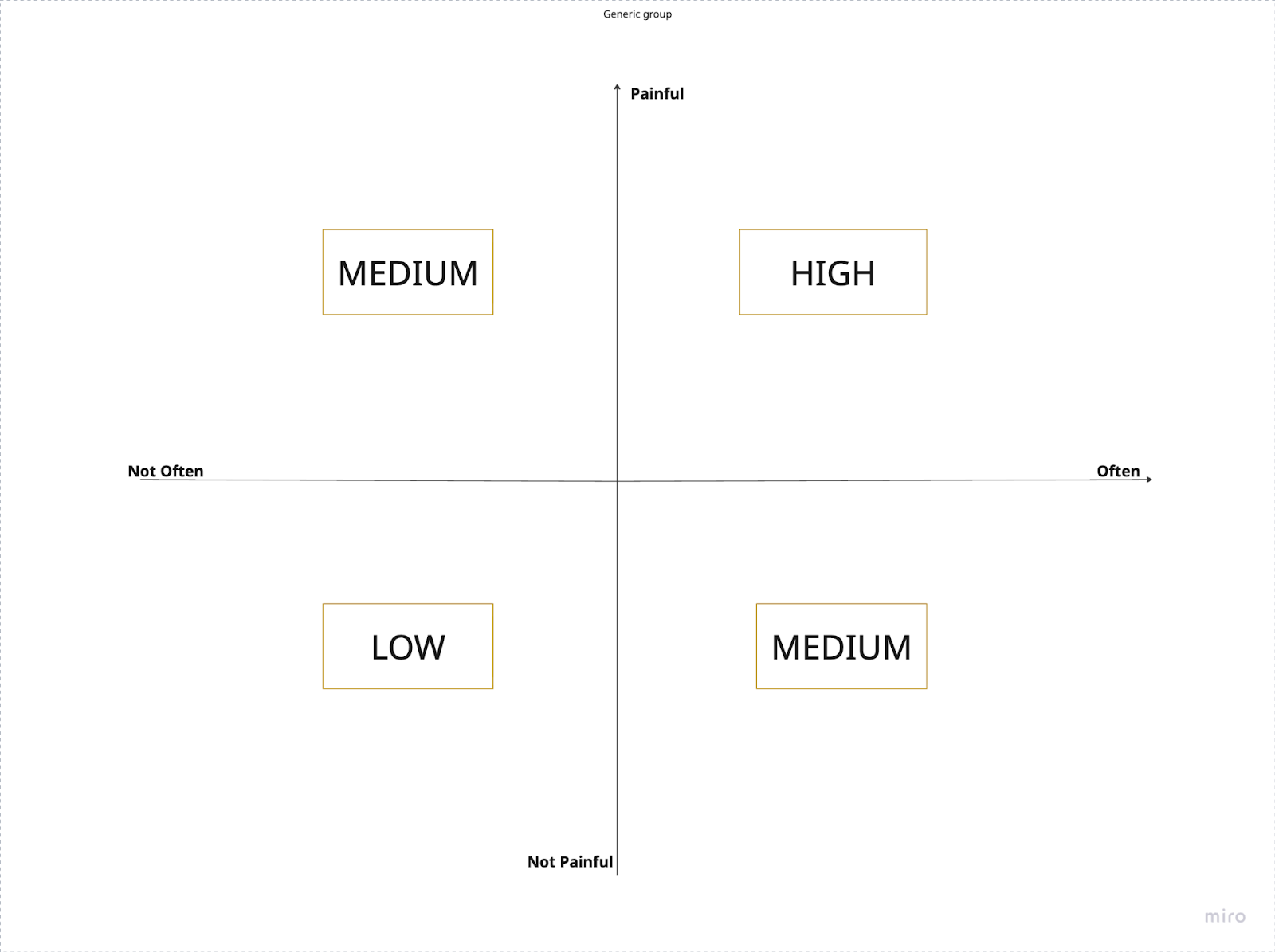

- Use a Priority Matrix: Map issues based on Frequency (how often it happens) vs. Severity (how painful it is).
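The priority matrix can be sketched in a few lines, assuming each pain point is scored on a 1 to 5 scale for frequency and severity. The quadrant labels, the cutoff of 3, and the frequency × severity score used for ordering are hypothetical choices, not a standard; adjust them to your own scales.

```python
from dataclasses import dataclass


@dataclass
class Pain:
    name: str
    frequency: int  # assumed scale: 1 (rare) to 5 (daily)
    severity: int   # assumed scale: 1 (minor annoyance) to 5 (blocks work)


def quadrant(p: Pain, threshold: int = 3) -> str:
    """Place a pain point in one of four hypothetical matrix quadrants."""
    frequent = p.frequency >= threshold
    severe = p.severity >= threshold
    if frequent and severe:
        return "fix now"
    if severe:
        return "plan for it"  # rare but painful
    if frequent:
        return "quick win"    # common but mild
    return "monitor"


def prioritize(pains: list[Pain]) -> list[tuple[str, int, str]]:
    """Rank pains by frequency x severity, highest first, with their quadrant."""
    for p in pains:
        if not (1 <= p.frequency <= 5 and 1 <= p.severity <= 5):
            raise ValueError(f"{p.name}: scores must be between 1 and 5")
    ranked = sorted(pains, key=lambda p: p.frequency * p.severity, reverse=True)
    return [(p.name, p.frequency * p.severity, quadrant(p)) for p in ranked]


if __name__ == "__main__":
    pains = [
        Pain("manual export", 5, 4),
        Pain("slow approvals", 2, 5),
        Pain("confusing pricing page", 4, 2),
        Pain("missing dark mode", 1, 1),
    ]
    for name, score, bucket in prioritize(pains):
        print(f"{score:>2}  {bucket:<11}  {name}")
```

Multiplying the two scores is only one option. It favors pains that are both common and severe, which lines up with the "fix now" quadrant, but you could weight severity more heavily if rare, blocking issues matter more for your product.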

A Faster Way to Fix Interview Bias, Ops, and Analysis

By this point, the problems are familiar. You need to ask better questions, reduce founder bias, improve prioritization, and standardize analysis. Each recommendation is reasonable on its own. The issue is what happens when teams try to apply all of them at once.

- You add a better script → interviews take longer.

- You add better recruiting → coordination becomes painful (much like why customers ignore most surveys).

- You add better analysis → insights get delayed.

- You add training → consistency still varies by interviewer.

Each fix helps in isolation, but together they turn interviews into a slow, expensive, high-effort process that only a few teams can maintain consistently. This is exactly why many founders eventually stop interviewing altogether.

A better approach is a system that handles the repetitive, bias-prone parts of the process instead of adding more tasks to your plate. That lets teams reach solid insights faster and more affordably, because it removes scheduling friction and analyzes results automatically without multiplying effort.

Differences Between Human-Led Interviews and AI Customer Interviewers

AI customer interviewers take on the repetitive, bias-prone, and time-consuming parts of the process, so teams can focus on interpreting insights instead of struggling to collect them. They are here to remove the operational friction that makes “doing interviews properly” unrealistic for most teams.

I reframed some of the most common concerns raised in product and startup communities as questions in the table below, comparing human-led interviews with AI-led interviews.

Perhaps surprisingly, research from USC (Lucas et al., 2014) suggests that people open up more to an automated interviewer than to one they believe is operated by a human, because they feel less “evaluation anxiety.”

What Changes When Interviews Run Themselves (and You Get Insights Overnight)

When teams stop interviewing, it is not because they stopped caring about customers. It is because the process became slow, expensive, and mentally exhausting. A process that leans this heavily on human consistency, time, energy, and interpretation often breaks down before it produces results.

This is why AI interviewers matter. They act as infrastructure rather than a replacement for human judgment. They solve the human struggle to stay neutral, keep conversations from drifting, and allow you to scale without chaos. Instead of isolated chats, you get repeatable, comparable signals.

Customer interviews remain one of the most effective ways to understand what people truly need. Don't let manual research bottleneck your growth. Try Frank AI Researcher and start turning real customer conversations into actionable insights overnight.
